A study of skin cancer detection using image analysis and machine learning

Gautham Praveen Salini

North Trail High School

Grade 11

Problem

AI image detection can be used for many purposes across various fields. One area where it could improve people's lives is the identification of skin cancer. At the moment, skin cancer is diagnosed in two steps: first, a visual inspection by a qualified physician, and second, a skin biopsy (of which there are various types), whose results often take 1 to 3 weeks. Even when dermatologists use dermoscopy as a diagnostic aid, the accuracy of melanoma detection can be only 75%-85%. Skin cancer tends to spread gradually to other parts of the body, so it is most curable in its initial stages, which is why early detection matters. By using AI for image detection, we can help shorten the time it takes a physician to reach a skin cancer diagnosis and provide a second source of verification.

This leads to several questions. How do AI models conduct image detection to come to conclusions? What processes are taking place on the backend of the system? How can these models be improved to increase accuracy? Which models provide the best output, and how can they be further improved?

Method

Analyzing multiple AI models to determine which one is the most accurate

I used Google Scholar to find articles about image detection using AI and gave preference to the most recent ones, so that the data and other components used in these articles are as current as possible.

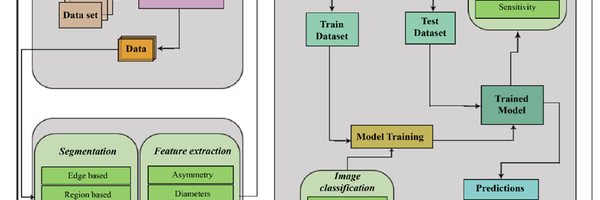

General Process breakdown

Image preprocessing

Image preprocessing is important because it finds inconsistent data and noise and works towards fixing them. This is an essential step, as it improves the quality of the data and in turn the model's performance. The first part of image preprocessing is image cleanup: all images are resized to a consistent size and dimensions. The next step is noise removal; in this scenario the noise is the hair present around the melanoma. Hair is removed using tools such as blackhat filtering (a morphological operation that highlights small dark features, such as hairs, against a brighter background) and inpainting (a patching tool that fills the removed area with pixels similar to those surrounding it).
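To make the hair-removal step more concrete, here is a minimal pure-Python sketch of blackhat filtering followed by a very simple inpainting pass on a tiny grayscale image. The 3x3 window, the threshold of 50, the function names and the "average of the clean neighbours" inpainting rule are all assumptions chosen for illustration; real pipelines typically rely on an image library (for example OpenCV's `cv2.morphologyEx` with `cv2.MORPH_BLACKHAT` and `cv2.inpaint`) with larger kernels.

```python
# Toy hair removal on a tiny grayscale image (0 = black, 255 = white).
# Assumptions: 3x3 square window, threshold of 50, and
# mean-of-neighbours inpainting. All three are illustrative choices.

def _neighbourhood(img, y, x):
    """Yield the coordinates of the 3x3 window around (y, x), clipped at the edges."""
    h, w = len(img), len(img[0])
    for ny in range(max(0, y - 1), min(h, y + 2)):
        for nx in range(max(0, x - 1), min(w, x + 2)):
            yield ny, nx

def _filter3x3(img, pick):
    """Apply pick (min or max) over every pixel's 3x3 neighbourhood."""
    return [
        [pick(img[ny][nx] for ny, nx in _neighbourhood(img, y, x))
         for x in range(len(img[0]))]
        for y in range(len(img))
    ]

def blackhat(img):
    """Closing (max filter, then min filter) minus the original image.
    Thin dark structures such as hairs come out as large values."""
    closed = _filter3x3(_filter3x3(img, max), min)
    return [[c - p for c, p in zip(crow, prow)] for crow, prow in zip(closed, img)]

def hair_mask(img, threshold=50):
    """Mark pixels whose blackhat response exceeds the threshold."""
    return [[value > threshold for value in row] for row in blackhat(img)]

def inpaint(img, mask):
    """Replace each masked pixel with the mean of its unmasked neighbours."""
    out = [row[:] for row in img]
    for y in range(len(img)):
        for x in range(len(img[0])):
            if mask[y][x]:
                clean = [img[ny][nx] for ny, nx in _neighbourhood(img, y, x)
                         if not mask[ny][nx]]
                if clean:
                    out[y][x] = round(sum(clean) / len(clean))
    return out

if __name__ == "__main__":
    # Bright "skin" (200) crossed by a one-pixel-wide dark "hair" (20).
    skin = [[200, 200, 20, 200, 200] for _ in range(5)]
    cleaned = inpaint(skin, hair_mask(skin))
    print(cleaned[0])
```

In this toy image the dark column is the only thin dark structure, so it is the only region the mask selects and the only region that gets patched.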

Feature extraction

Features such as asymmetry, diameter, compactness, borders and the presence of a blue-white veil are extracted from each image. Together they help identify whether a tumor is malignant or benign.
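As a rough illustration of how some of these features can be turned into numbers, the sketch below measures diameter, compactness and asymmetry on a binary lesion mask (1 = lesion pixel, 0 = skin). The specific definitions used here are assumptions for the example: compactness is taken as 4πA/P², diameter as the longer side of the bounding box in pixels, and asymmetry as the fraction of pixels that disagree when the lesion is mirrored left to right. Border and blue-white veil features need colour and edge information and are not covered.

```python
import math

def area(mask):
    """Number of lesion pixels."""
    return sum(sum(row) for row in mask)

def perimeter(mask):
    """Count pixel edges where a lesion pixel touches background or the image edge."""
    h, w = len(mask), len(mask[0])
    edges = 0
    for y in range(h):
        for x in range(w):
            if mask[y][x]:
                for dy, dx in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                    ny, nx = y + dy, x + dx
                    outside = not (0 <= ny < h and 0 <= nx < w)
                    if outside or not mask[ny][nx]:
                        edges += 1
    return edges

def compactness(mask):
    """4*pi*A / P^2: larger for round, smooth shapes, smaller for ragged ones."""
    p = perimeter(mask)
    return 4 * math.pi * area(mask) / (p * p)

def bounding_box(mask):
    """Return (top, bottom, left, right) of the lesion, inclusive."""
    ys = [y for y, row in enumerate(mask) if any(row)]
    xs = [x for row in mask for x, v in enumerate(row) if v]
    return min(ys), max(ys), min(xs), max(xs)

def diameter(mask):
    """Longest side of the bounding box, in pixels."""
    top, bottom, left, right = bounding_box(mask)
    return max(bottom - top + 1, right - left + 1)

def asymmetry(mask):
    """Fraction of pixels that differ from the left-right mirror image
    of the lesion within its bounding box (0 means perfectly symmetric)."""
    top, bottom, left, right = bounding_box(mask)
    crop = [row[left:right + 1] for row in mask[top:bottom + 1]]
    mismatched = sum(a != b for row in crop for a, b in zip(row, reversed(row)))
    return mismatched / area(mask)

if __name__ == "__main__":
    l_shape = [[1, 0],
               [1, 1]]
    print(diameter(l_shape), asymmetry(l_shape), compactness(l_shape))
```

A symmetric, blocky shape scores 0 for asymmetry, while the L-shaped example scores higher, which is the kind of difference a classifier can pick up on.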

Classification

AI and machine learning are used to distinguish malignant tumors from non-cancerous benign tumors. Many styles of machine learning and deep learning models are used, the most common being neural networks. Neural networks help identify patterns, such as those that separate malignant from benign tumors.

Evaluations and characteristics of various models

Each model is evaluated using the accuracy of its predictions. We compare the accuracy of these models to identify which one is most effective at identifying skin cancer, and compare their characteristics to understand why certain models perform better than others.

Research

Neural Networks

Neural networks are used in machine learning to identify patterns, such as those that distinguish malignant from benign tumors. They are inspired by the human brain. A neural network is composed of layers of nodes, and each node has input data, weights, a bias, a threshold and an output. Data is passed from one node to the next. Each node can be thought of as a small linear regression model: its inputs are multiplied by weights and summed with the bias, and the result is passed on if it exceeds the threshold. Neural networks rely on training data. Their error is measured using a cost function, and the goal of training is to reduce the cost function (reduce errors). Most neural networks have three general kinds of layer: an input layer, one or more hidden layers, and an output layer. The input layer receives the raw input data. The hidden layers do the majority of the computing; this is where the weights, biases and thresholds are used to process inputs. The output layer generates the final output, such as classification results.
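The description above can be sketched in a few lines of Python. This is a minimal illustration, not any particular model from the articles: the sigmoid activation (a smooth stand-in for a hard threshold), the mean squared error cost and all function names are assumptions chosen for the example.

```python
import math

def sigmoid(z):
    """Smooth version of a threshold: squashes any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """One node: weighted sum of the inputs plus a bias, passed through the activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

def layer(inputs, weight_rows, biases):
    """A layer is a list of nodes that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

def forward(inputs, hidden_w, hidden_b, out_w, out_b):
    """Input layer -> one hidden layer -> output layer."""
    hidden = layer(inputs, hidden_w, hidden_b)
    return layer(hidden, out_w, out_b)

def cost(predictions, targets):
    """Mean squared error: the quantity that training tries to reduce."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

if __name__ == "__main__":
    # Two inputs, two hidden nodes, one output node, with made-up weights.
    prediction = forward([0.8, 0.2],
                         hidden_w=[[0.5, -0.4], [0.3, 0.9]], hidden_b=[0.0, -0.1],
                         out_w=[[1.2, -0.7]], out_b=[0.05])
    print(prediction, cost(prediction, [1.0]))
```

Training consists of repeatedly adjusting the weights and biases so that `cost` gets smaller on the training data.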

SL_ImageNet

SL_ImageNet is a training strategy in which an AI model is first trained on a large, labelled image dataset such as ImageNet-21k. It learns by attempting to guess what each image shows and being corrected against human-provided labels. ImageNet-21k has roughly 21,000 different categories, and training on this massive dataset gives the AI broad general knowledge. This is a conventional transfer learning strategy: the neural network backbone is pretrained on ImageNet-21k. While the specific backbone can vary (e.g., ResNet, ViT, or ConvNeXt), the SL_ImageNet label denotes the supervised pretraining strategy. The pretrained "smart" brain can then be retrained on a new, smaller task (like identifying different types of skin cancer).
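The idea of reusing a pretrained backbone can be shown with a toy sketch. Everything here is an assumption for illustration: `frozen_backbone` is a hand-written stand-in for a pretrained network (a real one would be a ResNet, ViT or ConvNeXt loaded from a deep learning library), the "images" are four-pixel lists, and only a small logistic-regression "head" is trained on top of the frozen features.

```python
import math

def frozen_backbone(pixels):
    """Stand-in for a pretrained network. Its behaviour is fixed and never
    updated during training. Returns two features: mean brightness and contrast."""
    mean = sum(pixels) / len(pixels)
    contrast = max(pixels) - min(pixels)
    return [mean, contrast]

def _score(features, weights, bias):
    return sum(w * f for w, f in zip(weights, features)) + bias

def train_head(samples, labels, epochs=500, lr=0.5):
    """Train only the small classifier head, using gradient descent
    on the logistic loss. The backbone is used as-is."""
    features = [frozen_backbone(s) for s in samples]
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for f, y in zip(features, labels):
            p = 1.0 / (1.0 + math.exp(-_score(f, weights, bias)))
            error = p - y
            weights = [w - lr * error * fi for w, fi in zip(weights, f)]
            bias -= lr * error
    return weights, bias

def predict(pixels, weights, bias):
    """Return 1 or 0 for a new sample, reusing the same frozen backbone."""
    return 1 if _score(frozen_backbone(pixels), weights, bias) > 0 else 0

if __name__ == "__main__":
    # Hypothetical tiny "new task": label 1 = high-contrast patch, 0 = flat patch.
    samples = [[0.1, 0.9, 0.2, 0.8], [0.5, 0.5, 0.6, 0.5],
               [0.0, 1.0, 0.3, 0.7], [0.4, 0.5, 0.4, 0.5]]
    labels = [1, 0, 1, 0]
    w, b = train_head(samples, labels)
    print(predict([0.2, 0.9, 0.1, 0.8], w, b))
```

Because the backbone already produces useful features, only three numbers (two weights and a bias) need to be learned for the new task, which is why transfer learning works with much smaller labelled datasets.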

DINOv2 (self-Distillation with NO labels)

DINOv2 is a self-supervised learning model that was trained on 142 million high-quality, balanced images. It trains itself using two parts: the first sees the complete image, and the second sees only a small portion of it. Training runs until the second part can successfully match the first part's output for the whole image. This allows the model to understand context, where a small portion of an image can help it predict the whole. DINOv2 is a vision transformer (ViT). This pretraining helps the model learn to identify specific things very quickly (in this case melanoma).

SwAV_Derm

SwAV_Derm is a framework designed to master image recognition by combining self-supervised learning with fine-tuning on human-labelled data. In the first stage it uses the ResNet101 neural network to perform a “pretext task” using SwAV (Swapping Assignments between multiple Views). During this phase the model analyzes a massive dataset of unlabelled images, learning to identify consistent patterns and features on its own by comparing different views of the same data. In the next stage, the model is fine-tuned using human-labelled data, allowing it to move from general pattern recognition to specific pattern recognition (such as identifying skin cancer from a photo of a person's skin).

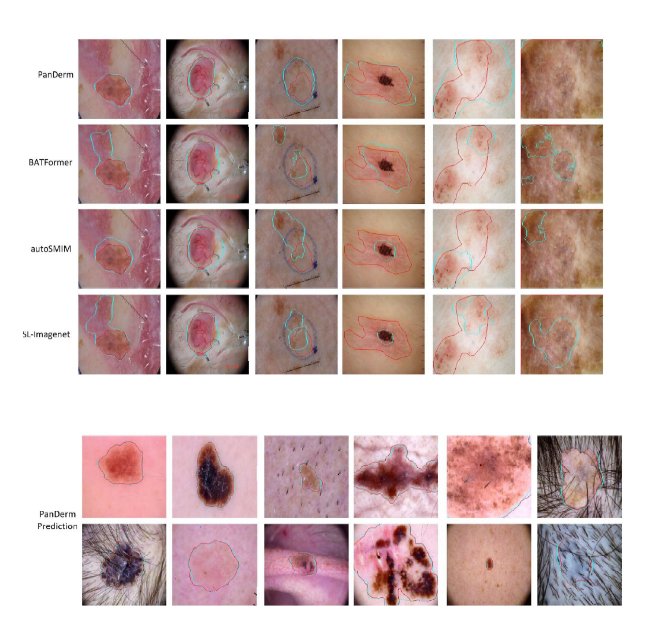

PanDerm: multimodal process flow

PanDerm is a multimodal dermatology foundation model, pretrained through self-supervised learning on a dataset of over 2 million real-world clinical images sourced from 11 clinical institutions. The model integrates four distinct imaging modalities:

(1) Dermoscopy

(2) Clinical photography

(3) Dermatopathology (biopsy slides)

(4) 3D total body photography (TBP)

PanDerm trains itself by intentionally "hiding" pieces of a skin image and forcing the system to predict what the missing sections should look like. To sharpen its accuracy, it cross-references its predictions with CLIP, a powerful AI "tutor" that helps the model align these visual patterns with meaningful medical concepts.

Advantages of PanDerm

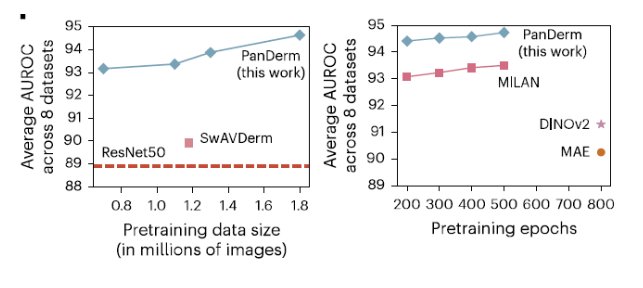

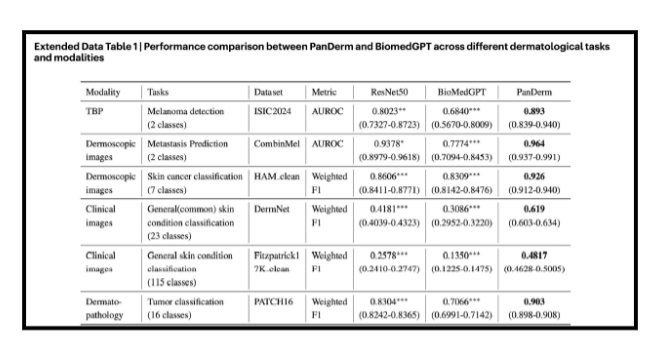

Once these models and their outputs have been compared, it is evident that PanDerm is the most accurate and efficient at predicting skin cancer.

- Requires only 5-10% of the labelled training data (limited training data)

- Showed a 20% better AUROC score in melanoma detection (AUROC, the Area Under the Receiver Operating Characteristic curve, is a performance metric that measures how well a diagnostic tool separates positive cases from negative ones)

- Shows a 34.7% higher weighted F1 score in differentiating skin conditions (F1 is the harmonic mean of precision and recall, so it balances the two)

- Surpasses the next best model by 4.7%

- Shows a strong capability to identify complex, multi
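The AUROC and F1 metrics mentioned in the list above can be computed by hand on a small example. The sketch below is a minimal illustration with made-up scores and labels, not the evaluation code or data from the PanDerm paper; the function names are my own.

```python
def auroc(scores, labels):
    """Probability that a randomly chosen positive case gets a higher score
    than a randomly chosen negative case (ties count as half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def f1_score(preds, labels, positive=1):
    """Harmonic mean of precision and recall for one class."""
    tp = sum(p == positive and y == positive for p, y in zip(preds, labels))
    fp = sum(p == positive and y != positive for p, y in zip(preds, labels))
    fn = sum(p != positive and y == positive for p, y in zip(preds, labels))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

def weighted_f1(preds, labels):
    """Per-class F1, averaged with each class weighted by how often it occurs."""
    n = len(labels)
    return sum(f1_score(preds, labels, c) * labels.count(c) / n for c in set(labels))

if __name__ == "__main__":
    labels = [1, 0, 1, 0]            # 1 = melanoma, 0 = benign (made-up data)
    scores = [0.9, 0.8, 0.3, 0.1]    # model confidence that each case is melanoma
    preds = [1, 1, 0, 0]             # scores thresholded at 0.5
    print(auroc(scores, labels), f1_score(preds, labels), weighted_f1(preds, labels))
```

AUROC looks at the ranking of the raw scores, so it does not depend on a threshold, while F1 is computed after the scores have been turned into yes/no predictions.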

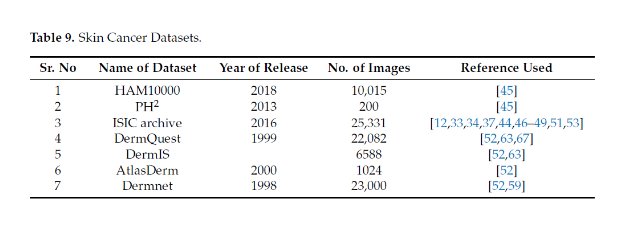

Data

PanDerm outperforms existing models on 28 evaluation tests across 4 modalities

To see how well it works in the real world, PanDerm was put to the test against thousands of clinical images. It successfully identified 23 common skin issues using a public database and correctly spotted up to 74 different conditions in two private datasets (MMT-09 and MMT-74).

Conclusion

Challenges of existing machine learning algorithms and how PanDerm overcomes them

1) Extensive Training: the system must undergo detailed training, which is a time-consuming process and demands extremely powerful hardware. PanDerm achieves peak performance in 200 epochs, while other models take 500 to 800 epochs. This significantly reduces training time and hardware demand.

2) Variation in Lesion Sizes: smaller lesions, 1 mm or 2 mm in size, are much more difficult to detect and more error-prone. PanDerm identifies areas of interest (lesions) regardless of size and "attends" to them with higher pixel density processing using spatial attention modules.

3) Images of Light-Skinned People in Standard Datasets. Traditional datasets have insufficient lesion images of dark-skinned people, which are vital for the accuracy of skin cancer detection systems. PanDerm utilizes colour space decoupling, or stain normalization, which separates the “luminance” (brightness/tone) from the “chrominance” (color/texture).

4) Small Interclass Variation in Skin Cancer Images. Some skin lesions are extremely hard to classify. PanDerm uses fine-grained visual classification, which identifies tiny irregularities that standard models ignore.

5) Unbalanced Skin Cancer Datasets. Real-world datasets used for skin cancer diagnosis are highly unbalanced: they contain very different numbers of images for each type of skin cancer. PanDerm uses a weighted loss function. Misclassifying a rare cancer is penalized more heavily, so the model is forced to prioritize the minority class.

6) Lack of Availability of Powerful Hardware. The lack of high computing power is a major challenge in training deep-learning based skin cancer detection systems. PanDerm utilizes self-supervised learning and masked latent modeling, which allows the model to learn better feature representations from unlabelled data much faster. Research shows that PanDerm matches the world's best models using 10% of the labelled data.

7) Lack of Availability of Age-Wise Classification of Images in Standard Datasets. PanDerm integrates age into the final decision layer of the neural network, which resolves the misclassifications made by age-blind models.

8) Analysis of Genetic and Environmental Factors. Current models are limited to isolated image tasks and don't consider a patient's age, history or genetic data. PanDerm processes dermoscopy images in one branch and a patient feature vector (genetic markers, family history of melanoma, the UV index of their region, etc.) in another. It combines all of the patient's information before reaching a conclusion. If the records show a high genetic risk, the system automatically flags the case for a biopsy recommendation.
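To show how a weighted loss function (challenge 5 above) pushes a model towards rare classes, here is a minimal sketch of class-weighted cross-entropy. It uses a common generic scheme, inverse-frequency weights, as an assumption; it is not taken from PanDerm's actual training code, and the data is made up.

```python
import math

def class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * class_count).
    Rare classes get large weights, common classes get small ones."""
    counts = {}
    for y in labels:
        counts[y] = counts.get(y, 0) + 1
    n, k = len(labels), len(counts)
    return {c: n / (k * count) for c, count in counts.items()}

def weighted_cross_entropy(probs, labels, weights):
    """probs[i][c] is the predicted probability of class c for sample i.
    Each sample's loss is scaled by the weight of its true class, and the
    total is normalized by the sum of the weights."""
    total = sum(-weights[y] * math.log(p[y]) for p, y in zip(probs, labels))
    return total / sum(weights[y] for y in labels)

if __name__ == "__main__":
    # Made-up unbalanced dataset: nine benign cases (0), one rare cancer (1).
    labels = [0] * 9 + [1]
    weights = class_weights(labels)

    confident = [[0.9, 0.1]] * 9          # benign cases predicted correctly
    miss_rare = confident + [[0.9, 0.1]]  # rare cancer predicted as benign
    miss_common = [[0.1, 0.9]] + [[0.9, 0.1]] * 8 + [[0.1, 0.9]]  # one benign missed

    print(weights)
    print(weighted_cross_entropy(miss_rare, labels, weights))
    print(weighted_cross_entropy(miss_common, labels, weights))
```

With these weights, a single mistake on the rare class costs the model far more than a single mistake on the common class, so training is steered towards getting the rare class right.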

Citations

A multimodal vision foundation model for clinical dermatology. Nat Med 31, 2691–2702 (2025).

TRM-ViT: A Tiny Recursive Vision Transformer for Efficient Melanoma Detection

Skin Cancer Detection: A Review Using Deep Learning Techniques

Melanoma Skin Cancer Detection using Image Processing and Machine Learning

Acknowledgement

I would like to thank my family for all the support they have given. I would also like to thank my friends who took part in CYSF projects before me and inspired me to start preparing a science project. Finally, I would like to thank all the authors and research fellows who created the wonderful articles that I have used.